HPOL5000 is a core unit in the Master of Public Health program at the University of Sydney. Anne Marie Thow and I co-coordinate the unit, which covers introductory health policy and health economics.

Semester 1 2020 started on the 17th of February and we were excited to have a large cohort of nearly 300 students. The unit runs with two concurrent modes of study:

- online (remote) learning, where students watch online lecture material, access reading material online and then participate in asynchronous tutorial activities via discussion boards, or

- block mode (face-to-face) learning, where students access reading materials and some pre-recorded lectures online, but also attend two full day workshops of lectures and activities, and 6 x 1.5 hour face-to-face tutorial groups.

The first few weeks of semester went well, with great participation in online introductory activities, and the first face-to-face workshop day for block mode students running smoothly. We had a small cohort of international students who couldn’t travel to Australia to start the semester due to COVID19, so we set up some special online (asynchronous) tutorial groups for them to attend in the meantime.

In week 3 we were advised to prepare, just in case teaching needed to move online. In week 4 this was confirmed: due to COVID19 pandemic restrictions, all teaching activities had to move online. This gave us one week to move the 2nd workshop day (held in week 5, and focussed on health economics content) online, as well as work out how to manage the rest of the semester.

Overall, I think the 2nd workshop ran well online, although it was a lot of work to set up. I learned a lot that I will use to improve future workshops, whether they are held online or face to face (or a combination) and thought it might be worth documenting what I did and how it worked.

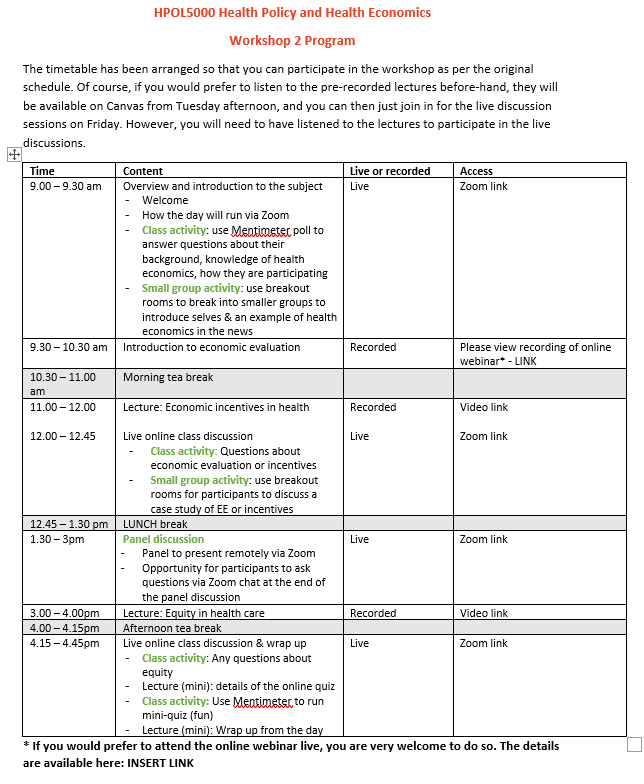

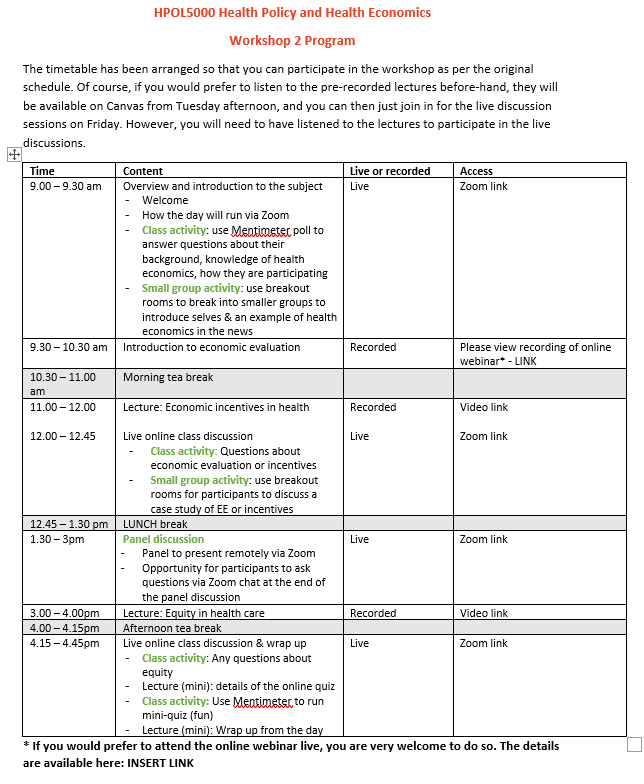

We decided to run the workshop on the day it was scheduled, but with some tweaks for online delivery. We arranged a mixture of pre-recorded lectures and interactive Zoom sessions, and scheduled them all in a timetable similar to what students would have followed for the face to face workshop (see timetable at bottom of post).

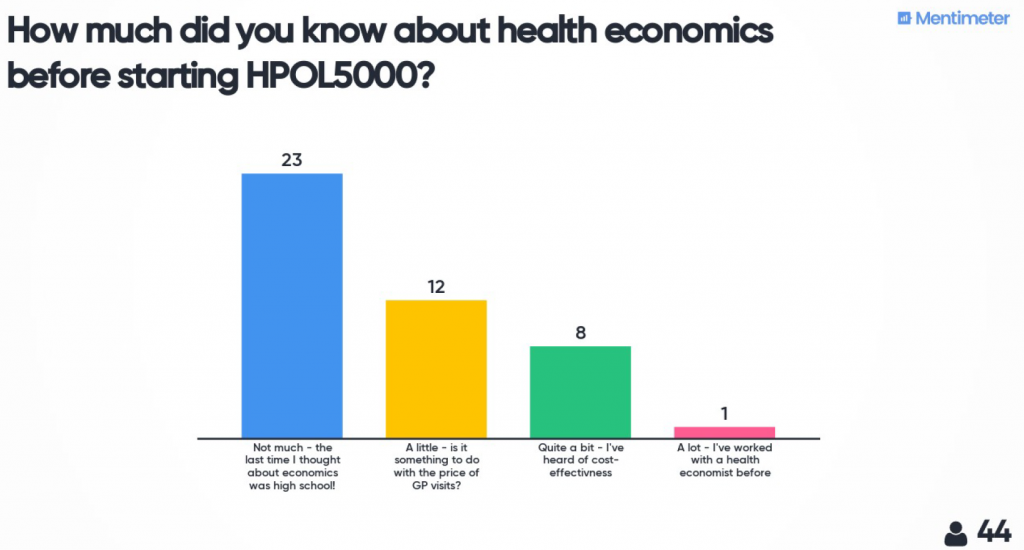

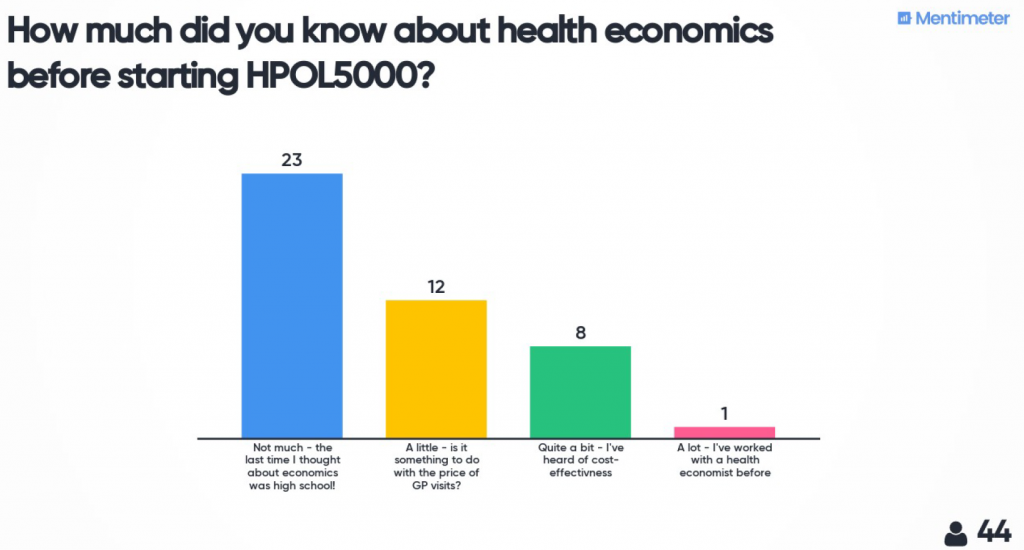

The day started with a live Zoom meeting to introduce myself, the material and how the day would run. I used Mentimeter to do some quick polls and word cloud activities to find out a bit more about the students who were participating.

The three planned lectures were pre-recorded and uploaded for students to access a week before the workshop. This allowed students to choose if they wanted to do the full workshop day as programmed, or access the lectures in the week before and just attend the live sessions on the day. Using pre-recorded lectures instead of doing them all live also gave me time on the workshop day to prepare for (and recover from) the more interactive sessions during the day.

Each lecture was allocated a time during the day when students could go off and watch it (if they hadn’t already), and a Zoom meeting was then held for discussion, questions and some interactive activities. For the interactive activities I used Mentimeter tasks as well as Zoom breakout rooms to encourage students to interact with each other. One of these sessions worked well and one didn’t. Making sure students had access to the small group discussion material outside of Zoom would have been really helpful (I ended up telling students to take a screenshot or photo of the exercise on the screen so they could refer to it in the groups!).

We also had a panel discussion session. When run in the face-to-face workshop this is usually very popular with students, and I was really pleased with how it ran online. We used a standard Zoom meeting rather than a Zoom webinar and this worked fine. As with the rest of the day, the students were really helpful with their cameras and microphones, and we had good interaction via the chat function, with people asking questions.

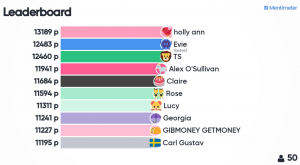

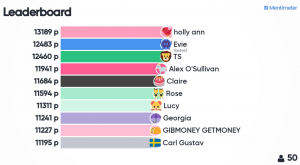

In the last interactive session of the day I used a Mentimeter quiz to check concept understanding. I had feedback that this was one of the best bits of the day. There were 5 questions about each of the 3 main topics we had covered, and the questions were designed to be relatively easy, but students only had 10 seconds to answer each one. A leader board was shown at the end of each set of 5 questions to generate a feeling of competition, and it was simple to set up.

Student feedback:

We had a lot of positive feedback about the workshop. A quick evaluation (done via Mentimeter) at the end of the day showed the Panel Discussion session and Quiz were both very popular. When asked to name one thing they found confusing or unclear, many people mentioned that Zoom was sometimes unstable and, in particular, that the breakout room activities were rushed. So next time I will allow much more time for those, and make sure I have a second person on hand to help manage the logistics. Overall the comments were positive, and made the whole experience worthwhile. Some examples:

- “It was actually a really effective alternative to a face to face day. The timetable with spaced out live webinars kept me on track with time”

- “The panel was really great to see the concepts we’ve gone through in the lectures and readings from a professional perspective. I’ve really enjoyed the health economics side of this course more than anticipated so thank you for this lovely teaching”

- “The panel discussion… the experts we had onboard really enriched and contributed to the learning process”

- “Quiz time is really useful to review”

- “Being able to snack the entire time while listening to everyone!”

Overall, using a mixture of tools and activities was helpful to keep students (and myself!) interested and engaged. A whole day of Zoom was a lot, and I think multi-day workshops would need to be extra diligent about giving appropriate breaks, making pre-recorded material available beforehand, and mixing up the type of interaction. For a large group like this, having a second person online to help with coordination and admin would be great. But I would absolutely run a workshop like this again in the future, although hopefully with more than a week to prepare!

My top tips:

Zoom:

- I am still not sure whether using one Zoom meeting for the whole day (which is what I did) is better than setting up a separate Zoom meeting for each interactive session. Different meetings would allow different settings for each session (e.g. a webinar for the panel discussion), but it also means students need to log into the right room at the right time.

- I made a slide to display on the screen in between sessions, which was helpful.

- I wish I’d recorded every session to share with students who couldn’t join on the day. I now know that you can record multiple sections of a Zoom meeting and each downloads as a separate file.

- I made sure I had a clear place nominated on Canvas and mentioned first thing in the morning where students should go for information if something went wrong with the technology during the day (e.g. I’ll post here [LINK] on Canvas, and I’ll send an announcement)!

Break out rooms:

- Using the random allocation setting was easy and meant students mixed

- They take time for students to join and introduce themselves, so allow extra time

- Need to ensure students know what they need to do and can still access materials while in the breakout room – either pre-send slides or use Mentimeter

- It would have been great to have a second ‘admin’ person who could manage the logistics of putting people in rooms so I could circulate through the rooms contributing to the discussion, more like the face to face setting.

- Err on the side of having slightly larger groups than you think you need, because some students sign in and then turn off their camera and mic and don’t participate. I suggest 4 as the minimum (you are then likely to get at least 2 active participants), and up to 6 or 7 still works ok.

Chat function:

- It’s difficult to monitor while you’re presenting, but…

- I’ve seen some really nice examples of students using it amongst themselves to share links and clarify content during a lecture.

Mentimeter:

- Was a great way to get engagement from a large class – much more flexible than ‘raising hands’ in class or polls within Zoom

- The quiz with the leaderboard was fun! The only problem was not being able to give away the small prizes (e.g. chocolate frogs) that I would usually hand out in a face-to-face setting. I’ve been trying to think creatively about what might replace this – perhaps the winner gets a link to my favourite health economics GIF?!

Security:

- I didn’t have any problems with security or inappropriate behaviour, although in one lecture I’ve given subsequently a student started sharing their screen while playing a computer game during one of the breaks. But I now add a password to most Zoom meetings by default, and for any larger group meetings I think I would always try to have an administrative person online who could handle things like that while I’m teaching.

Timetable